DeepSeek V4: The 1-Trillion Parameter Behemoth Reshaping AI Economics

The AI landscape has just been hit by another seismic shift. DeepSeek has officially released DeepSeek V4, its long-awaited flagship model scaling to a staggering 1 trillion parameters. Launching today, April 7, 2026, this release marks the most significant milestone in open-weight AI history.

This isn't just about size; it's about a fundamental rethink of how massive models operate, moving from "brute force" compute to surgical architectural efficiency.

The Core Specs: V3 vs. V4

To understand the leap, we have to look at the numbers. Despite being nearly 50% larger in total parameters than its predecessor, V4 is designed to be faster and more cost-effective.

| Specification | DeepSeek V3 | DeepSeek V4 (Flagship) |
|---|---|---|
| Total Parameters | 671B MoE | ~1 Trillion (1T) MoE |
| Active Parameters | ~37B | ~32B (Estimated) |
| Context Window | 128K (later 1M) | 1M+ Tokens (Native) |
| Architecture | Standard MoE | NSA / SPCT / mHC |
| Primary Goal | General Intelligence | Autonomous Engineering & Reasoning |
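To make the comparison concrete, here is a quick back-of-the-envelope check of the figures in the table. It is only arithmetic on the numbers quoted above; the V4 values are the article's estimates, not confirmed specifications, and the helper name `active_fraction` is just for illustration.

```python
# Figures from the comparison table, in billions of parameters.
# The V4 numbers are estimates, not confirmed specifications.
V3_TOTAL_B, V3_ACTIVE_B = 671, 37
V4_TOTAL_B, V4_ACTIVE_B = 1000, 32


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of a MoE model's parameters that is used for each token."""
    return active_b / total_b


# Relative growth in total parameters from V3 to V4.
growth = V4_TOTAL_B / V3_TOTAL_B - 1

print(f"Total parameter growth: {growth:.1%}")
print(f"V3 active share: {active_fraction(V3_ACTIVE_B, V3_TOTAL_B):.1%}")
print(f"V4 active share: {active_fraction(V4_ACTIVE_B, V4_TOTAL_B):.1%}")
```

On these numbers the total grows by roughly 49%, while the share of parameters active per token drops from about 5.5% to about 3.2%. That gap is what the "larger but cheaper" claim rests on.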

Architectural Breakthroughs: NSA and SPCT

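The leaked "Commentary" details point toward two major technical pillars: NSA (Native Sparse Attention) and SPCT. The commentary expands SPCT as "Sparsity-Preserving Compute Technology," although in DeepSeek's published research the acronym stands for Self-Principled Critique Tuning, a reward-modeling method rather than an attention architecture.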

1. NSA/SPCT Tech

These techniques promise to "slash costs while maximizing speed." Sparse attention lets each token attend to a selected subset of the context instead of every earlier token, which cuts the cost of long-context inference. Separately, the Mixture-of-Experts design routes each token through only a small fraction of the network (~32B of ~1T parameters, about 3%), so the model keeps 1T parameter-level "wisdom" while holding per-token compute, energy consumption, and latency low.
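The idea of activating only a small part of the model per token can be sketched with a generic top-k Mixture-of-Experts router. This is a textbook-style illustration, not DeepSeek's routing code: V4's actual router is not public, and the names `top_k_route` and `moe_forward`, the toy experts, and the gate scores are all assumptions made for the example.

```python
import math


def top_k_route(gate_logits, k):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    ranked = sorted(range(len(gate_logits)),
                    key=lambda i: gate_logits[i], reverse=True)
    chosen = ranked[:k]
    # Softmax over the selected logits only (shifted by the max for stability).
    peak = max(gate_logits[i] for i in chosen)
    exps = [math.exp(gate_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


def moe_forward(x, experts, gate_logits, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))


# Toy experts: each one just scales its input by a different factor.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]

# Hypothetical gate scores for one token; experts 1 and 3 tie for the top.
logits = [0.1, 2.0, -1.0, 2.0]
print(top_k_route(logits, k=2))
print(moe_forward(1.0, experts, logits, k=2))
```

With four experts and k=2, half of the expert parameters sit idle for this token. Scale the same pattern to hundreds of experts with a small k and the active share drops to a few percent, which is the regime the ~32B-of-1T figure describes.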

2. GRPO-Powered Reasoning

Following the success of the R1 series, V4 reportedly builds GRPO (Group Relative Policy Optimization) into its training pipeline. GRPO is a reinforcement learning method that samples several answers per prompt and reinforces the ones that score above the group average, which is where the "turbo boost" on math and coding tasks comes from. The result is a seamless "thinking" mode: the model writes out intermediate reasoning steps before committing to a final answer.
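The core of GRPO is easy to show in isolation. The sketch below computes the group-relative advantage used in the published GRPO formulation: each sampled answer's reward is normalized against the mean and standard deviation of its own group, so no separate value network is needed. The function name, the `eps` constant, and the example rewards are assumptions for illustration, and the rest of the training loop (policy ratio, clipping, KL penalty) is left out.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against its own group's mean and spread.

    Answers that beat the group average get a positive advantage and
    are reinforced; below-average answers get a negative one.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    # eps avoids division by zero when every answer gets the same reward.
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for four sampled answers to one math prompt:
# 1.0 = correct final answer, 0.0 = incorrect.
rewards = [1.0, 0.0, 0.0, 1.0]
print(group_relative_advantages(rewards))
```

One consequence of this design is that a group where every answer earns the same reward produces all-zero advantages, so prompts that are uniformly too easy or too hard contribute no learning signal.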

The "Engram" and "mHC" Innovation

Web leaks and recent research papers from the DeepSeek team suggest even deeper innovations:

- Engram Conditional Memory: This technology separates static knowledge (facts) from dynamic reasoning. It uses an O(1) hash lookup for data retrieval, allowing the model to "know" things instantly without wasting reasoning compute.

- Manifold-Constrained Hyper-Connections (mHC): A framework that addresses signal amplification issues in ultra-deep networks. This enables stable training of models at the 1T scale with minimal overhead.
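The O(1) lookup idea behind Engram can be illustrated with a hashed n-gram table: the n-gram is hashed to a slot index, and the slot holds a stored vector, so retrieval cost does not grow with the size of the memory. This is a minimal sketch of the general technique only, not the Engram design itself. The class name `HashedNgramMemory`, the SHA-256 hash, and the toy table contents are all assumptions.

```python
import hashlib


class HashedNgramMemory:
    """Toy constant-time memory: hash an n-gram to a slot, return its vector."""

    def __init__(self, num_slots, dim):
        self.num_slots = num_slots
        # Deterministic placeholder vectors; a real model would learn these.
        self.table = [
            [((slot * 31 + j) % 7) / 7.0 for j in range(dim)]
            for slot in range(num_slots)
        ]

    def slot_for(self, ngram):
        """Map a tuple of tokens to a slot index with a stable hash."""
        digest = hashlib.sha256(" ".join(ngram).encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % self.num_slots

    def lookup(self, ngram):
        """One hash and one index: cost is independent of table size."""
        return self.table[self.slot_for(ngram)]


memory = HashedNgramMemory(num_slots=1024, dim=4)
print(memory.slot_for(("capital", "of", "France")))
print(memory.lookup(("capital", "of", "France")))
```

Different n-grams can collide in the same slot, which is the usual price of hashing into a fixed-size table. How the real system handles collisions is not something this sketch tries to reproduce.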

The 1M+ Token Context Window

The V4 release highlights a 1M+ token context window. This isn't just a gimmick; it's a tool for Autonomous Software Engineering. Imagine feeding an entire repository (thousands of files, documentation, and history) into a single prompt. At that capacity, whole-codebase analysis fits in a single pass, and V4 is pitched as avoiding the "lost in the middle" retrieval failures that affect other long-context models.
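Whether a given repository actually fits is worth checking before building a prompt. The sketch below gives a rough estimate under loud assumptions: about 4 characters per token (a common rule of thumb, not V4's tokenizer), a 1,000,000-token limit, and a hypothetical 50,000-token reserve for instructions and the model's reply. The file contents are passed in as a plain dictionary so the example stays self-contained.

```python
import math

CHARS_PER_TOKEN = 4          # assumption: rough average, not a real tokenizer
CONTEXT_LIMIT = 1_000_000    # the 1M-token window quoted in the article


def estimate_tokens(text):
    """Approximate token count from character length."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(files, limit=CONTEXT_LIMIT, reserve=50_000):
    """Return (estimated_tokens, fits) for a mapping of path -> file text.

    `reserve` holds back room for the instructions and the model's answer.
    """
    total = sum(estimate_tokens(body) for body in files.values())
    return total, total + reserve <= limit


# Hypothetical miniature repository.
repo = {
    "src/main.py": "print('hello')\n" * 200,
    "README.md": "# Demo project\n" * 50,
}
print(fits_in_context(repo))
```

For a real decision, swap the character heuristic for the model's own tokenizer, since code and non-English text can tokenize far less efficiently than this estimate suggests.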

Release Timeline and Market Impact

While early leaks and commentary had suggested an October timeline, DeepSeek has surprised the industry by dropping the full 1T model today. This strategic "early" release positions DeepSeek as the definitive leader in open-weight AI.

Why It Matters

DeepSeek's strategy continues to commoditize the base model. By releasing a 1T parameter model with the efficiency of a much smaller one, they are forcing Western competitors to justify their $100M+ training budgets. If V4 can deliver GPT-5 level performance on a fraction of the hardware, the "Compute Moat" might just have evaporated.

Summary

DeepSeek V4 represents more than just another model release; it's a declaration of efficiency. With its NSA/SPCT architecture and GRPO reasoning, it is set to become the primary engine for the next generation of autonomous AI agents.

The "Explosion" is here.

Analysis based on DeepSeek technical papers, community leaks, and recent news commentary.

Read more

Qwen 3.7 Max: Alibaba's Agent-Grade Reasoning Model

Alibaba's Qwen 3.7 Max is a text-only reasoning flagship with 1M token context, scoring #5 on the Artificial Analysis Intelligence Index and #3 in coding benchmarks.

Meta Muse Spark: A New Frontier in Multimodal Reasoning

Meta's Superintelligence Labs unveils Muse Spark, a natively multimodal reasoning model with multi-agent orchestration and strong performance in health and visual reasoning.

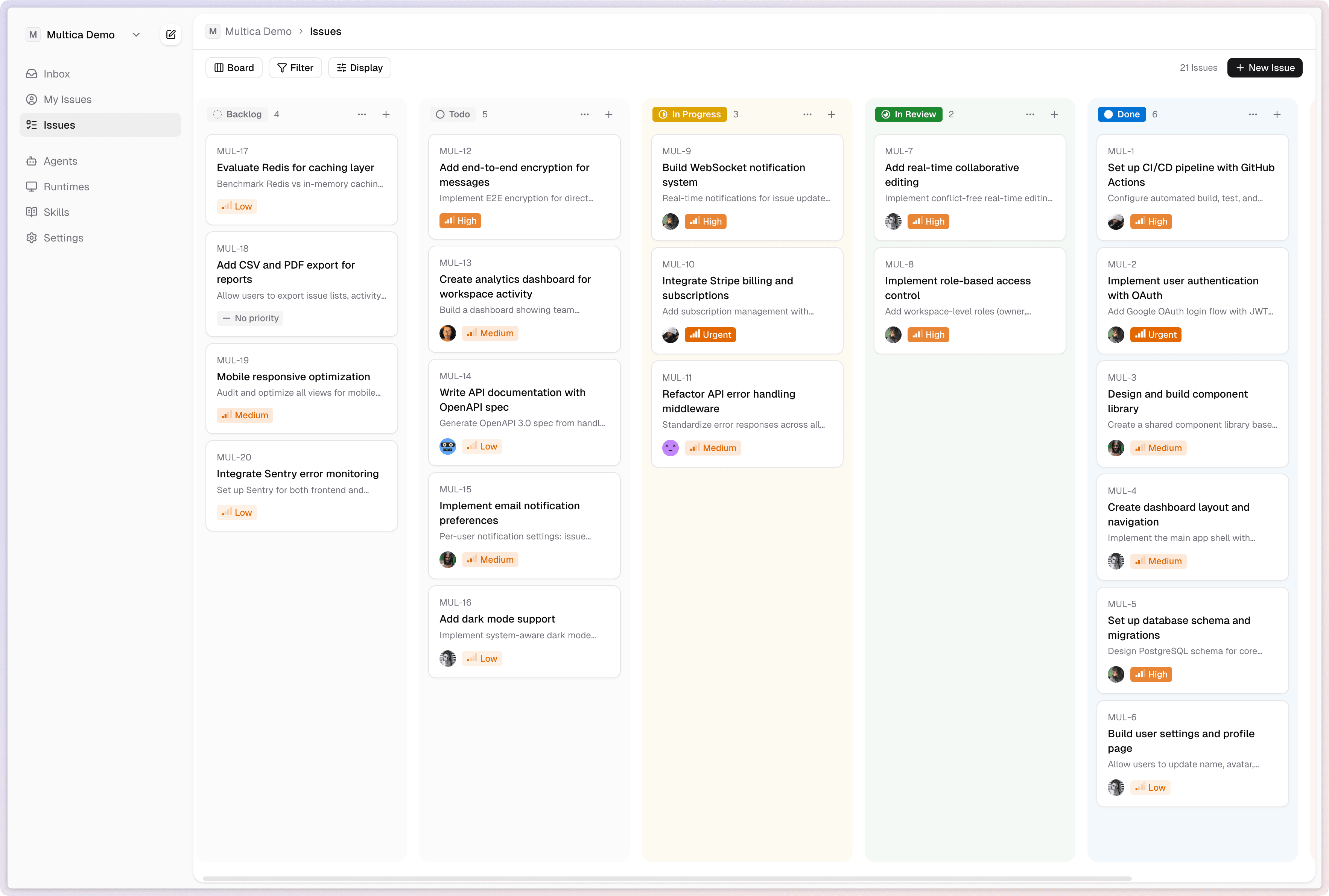

Multica: Turn AI Agents Into Real Teammates

Multica is an open-source platform that manages AI coding agents as full team members - assigning tasks, tracking progress, and compounding reusable skills across your organization.