DeepSeek V4 Leak: 1 Trillion Parameters and 1M Token Context Window

The AI world is buzzing with leaked information about DeepSeek V4, and the numbers are nothing short of staggering. If the benchmarks prove accurate, this could be the most powerful open-weight AI model ever released.

V4 Lite (Sealion-Lite) Has Reportedly Surfaced

One important update: a smaller variant, often referred to as DeepSeek V4 Lite (Sealion-Lite), has reportedly surfaced as of March 9, 2026.

The key point: V4 Lite is rumored to have ~200B parameters. That makes it far more accessible than the rumored trillion-parameter flagship, and it is likely intended as an early look at V4-family improvements.

The Leaked Specifications

According to leaked benchmarks, DeepSeek V4 represents a massive leap forward in AI capability:

Parameters and Architecture

- V4 Lite (~200B parameters): A smaller variant (often called Sealion-Lite) that has reportedly surfaced

- Flagship V4 (up to ~1T parameters, rumored): The model reportedly scales up toward 1 trillion parameters

- Mixture-of-Experts (MoE): Only ~37B parameters reportedly active per token, so per-token compute scales with the active experts rather than the full parameter count

- mHC architecture: Likely Manifold-Constrained Hyper-Connections, a DeepSeek-published design that widens the residual stream while keeping training stable at scale
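The MoE claim is easier to see with a small example. The sketch below assumes a generic softmax top-k router. It is not DeepSeek's actual router, which adds shared experts and its own load-balancing scheme. A router scores every expert for a token, only the top k experts run, and their outputs are mixed by renormalized gate weights. All sizes and the `make_expert` toy experts are hypothetical. The final line applies the leaked ~37B active / ~1T total figures.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, k):
    """Keep the k highest-scoring experts and renormalize their gates to sum to 1."""
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return {i: probs[i] / norm for i in top}


def moe_forward(x, experts, router_logits, k):
    """Run only the selected experts; unselected experts are never evaluated."""
    gates = route_token(router_logits, k)
    out = [0.0] * len(x)
    for idx, gate in gates.items():
        y = experts[idx](x)
        out = [o + gate * v for o, v in zip(out, y)]
    return out, sorted(gates)


def make_expert(scale):
    # Hypothetical toy expert: elementwise scaling stands in for a feed-forward block.
    return lambda x: [scale * v for v in x]


def active_fraction(active_params, total_params):
    """Share of weights used per token, a rough proxy for per-token compute."""
    return active_params / total_params


if __name__ == "__main__":
    random.seed(0)
    num_experts, k = 8, 2  # hypothetical sizes, not DeepSeek V4's real configuration
    experts = [make_expert(float(i + 1)) for i in range(num_experts)]
    logits = [random.gauss(0.0, 1.0) for _ in range(num_experts)]
    out, used = moe_forward([1.0, 2.0], experts, logits, k)
    print("experts used:", used, "output:", out)
    # Rumored figures from the leak: ~37B active out of ~1T total.
    print(f"active share: {active_fraction(37e9, 1e12):.1%}")  # active share: 3.7%
```

Under these rumored numbers, each token touches roughly 3.7% of the weights. The full model still has to fit in memory, though.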

Context and Capabilities

- 1 million token context window: A large jump from DeepSeek V3's 128K, enough to process extremely long documents in a single prompt

- Multimodal support: Seamless processing of text, images, and video

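- DSA Lightning Indexer: Builds on DeepSeek Sparse Attention (DSA) from V3.2-Exp, where a lightweight "lightning indexer" scores earlier tokens so each query attends only to the most relevant subset, cutting long-context attention cost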
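The indexer idea can be sketched in a few lines. The sketch follows the scoring rule described for V3.2-Exp's DSA. Each earlier token s gets a cheap score, the sum over a few indexer heads j of w_j · ReLU(q_j · k_s). Only the top-k tokens by that score go through full attention. The function names, the toy vectors, and the reuse of one set of keys for both the indexer and attention are simplifications for illustration. The real indexer uses its own small projections, and this is not DeepSeek's implementation.

```python
import math


def relu(x):
    return x if x > 0.0 else 0.0


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def index_scores(index_queries, head_weights, index_keys):
    """Score each earlier token s as sum_j w_j * ReLU(q_j . k_s) over the indexer heads."""
    return [
        sum(w * relu(dot(q, k)) for q, w in zip(index_queries, head_weights))
        for k in index_keys
    ]


def select_top_k(index_queries, head_weights, index_keys, k):
    """Return positions of the k highest-scoring tokens, in original order."""
    scores = index_scores(index_queries, head_weights, index_keys)
    ranked = sorted(range(len(scores)), key=lambda s: scores[s], reverse=True)
    return sorted(ranked[:k])


def sparse_attention(query, keys, values, selected):
    """Ordinary scaled dot-product attention, restricted to the selected positions."""
    scale = 1.0 / math.sqrt(len(query))
    weights = softmax([dot(query, keys[s]) * scale for s in selected])
    dim = len(values[0])
    return [sum(w * values[s][d] for w, s in zip(weights, selected)) for d in range(dim)]


if __name__ == "__main__":
    # Toy data: keys/values are assumed to be the tokens preceding the query (causal).
    # For brevity the same key vectors feed both the indexer and the attention step.
    keys = [[1.0, 0.0], [0.0, 1.0], [2.0, 0.0], [-1.0, 0.0]]
    values = [[1.0], [5.0], [3.0], [7.0]]
    query = [1.0, 0.0]
    selected = select_top_k([query], [1.0], keys, k=2)
    print("attended positions:", selected)  # attended positions: [0, 2]
    print("output:", sparse_attention(query, keys, values, selected))
```

The payoff grows with context length. V3.2-Exp reportedly keeps a fixed budget of 2,048 selected tokens per query, so at a 1M-token context each query would attend to only about 0.2% of the available positions.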

Performance Benchmarks

The leaked benchmarks paint an impressive picture:

- 90% on HumanEval (code generation)

- 81% on SWE-bench (real-world software engineering tasks)

- Potential to outperform leading models including Claude Opus and GPT-5.4

Pricing Expectations

If the model launches as expected:

- $0.30 per million tokens: Potentially the most cost-effective frontier model

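To put the rumored rate in concrete terms, here is a minimal cost estimate assuming simple linear per-token pricing. The leak does not say whether $0.30 applies to input tokens, output tokens, or both, so the `estimate_cost_usd` helper and its default rate are illustrative assumptions only. The workload in the example is also hypothetical.

```python
# Leaked figure; whether it covers input, output, or both is unconfirmed.
RUMORED_USD_PER_MILLION = 0.30


def estimate_cost_usd(tokens, usd_per_million=RUMORED_USD_PER_MILLION):
    """Linear per-token pricing: cost = tokens / 1,000,000 * rate."""
    if tokens < 0:
        raise ValueError("token count must be non-negative")
    return tokens / 1_000_000 * usd_per_million


if __name__ == "__main__":
    # Filling the full rumored 1M-token context once.
    print(f"${estimate_cost_usd(1_000_000):.2f}")  # $0.30
    # Hypothetical workload: 200 requests of 50,000 tokens each (10M tokens).
    print(f"${estimate_cost_usd(200 * 50_000):.2f}")  # $3.00
```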

Release Timeline

Insider leaks suggest a rescheduled launch in the last two weeks of April 2026. One factor potentially influencing the timing is the upcoming Trump-Xi meeting, where demonstrating Chinese AI parity could strengthen China's negotiating position on chip export controls.

Controversy and Concerns

The DeepSeek V4 story hasn't been without drama. Reports indicate delays in the model's release, raising questions about its readiness. Additionally, DeepSeek faced scrutiny over a potential model swap during a seven-hour outage, highlighting the need for transparency in AI development.

What This Means for the AI Race

If DeepSeek V4 delivers on even half of these specifications, it represents a significant milestone:

- Open-weight momentum: DeepSeek continues to challenge proprietary models by releasing open weights

- Efficiency gains: MoE architecture shows it's possible to scale total capacity without a matching rise in per-token compute, although all weights must still be held in memory

- Context revolution: 1M tokens opens new possibilities for research and enterprise applications

The question now is whether these specs will hold up under scrutiny, or whether DeepSeek will face the same verification challenges as its previous releases.

This analysis is based on leaked benchmarks and industry reports. All specifications are unverified until official release.

Read more

Qwen 3.7 Max: Alibaba's Agent-Grade Reasoning Model

Alibaba's Qwen 3.7 Max is a text-only reasoning flagship with 1M token context, scoring #5 on the Artificial Analysis Intelligence Index and #3 in coding benchmarks.

Meta Muse Spark: A New Frontier in Multimodal Reasoning

Meta's Superintelligence Labs unveils Muse Spark, a natively multimodal reasoning model with multi-agent orchestration and strong performance in health and visual reasoning.

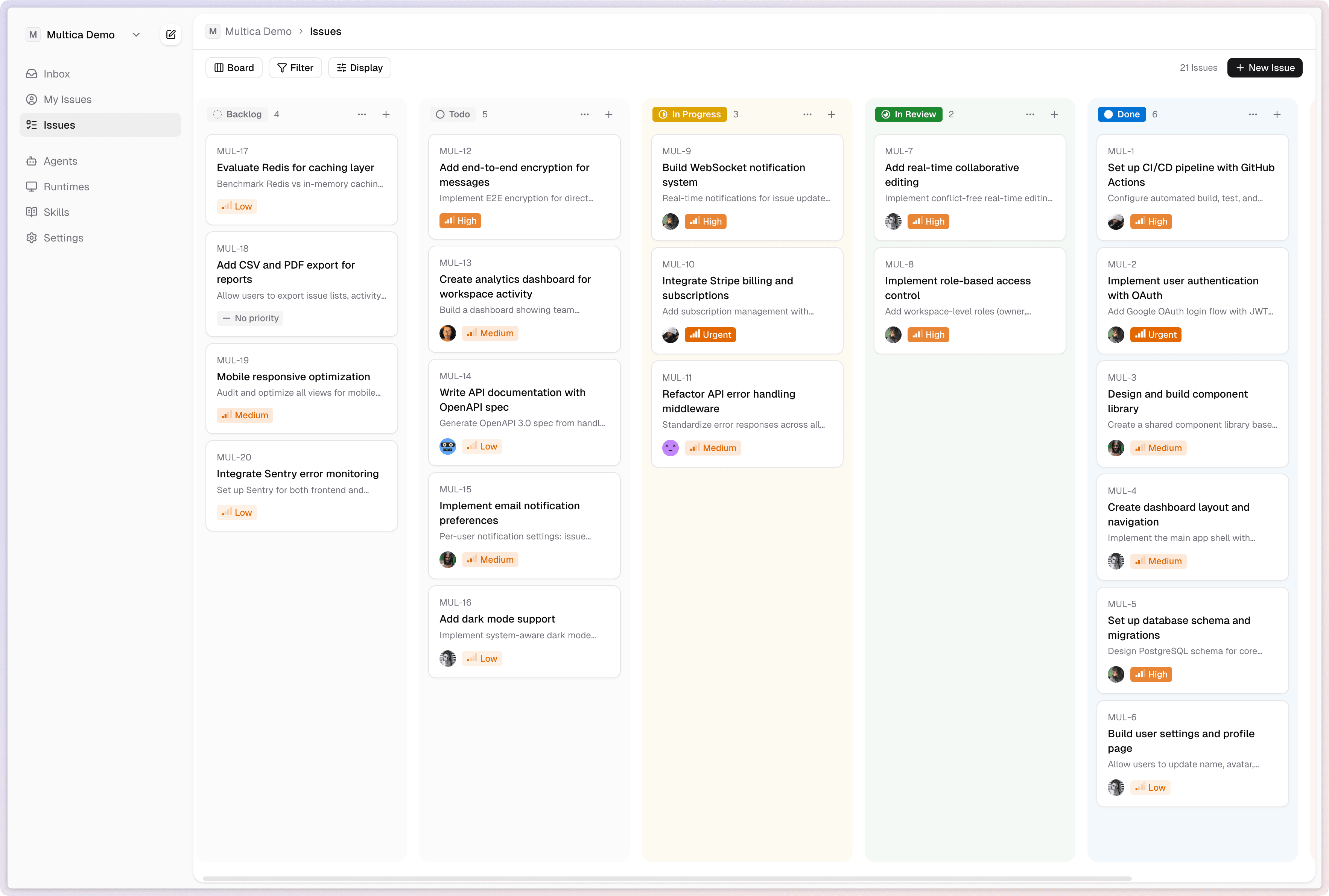

Multica: Turn AI Agents Into Real Teammates

Multica is an open-source platform that manages AI coding agents as full team members - assigning tasks, tracking progress, and compounding reusable skills across your organization.