Zhipu AI Launches GLM-5.1: A New Benchmark for Open-Source Intelligence

Zhipu AI has officially unveiled GLM-5.1, the latest iteration of its flagship large language model series. This release marks a significant milestone in the global AI landscape, as the Beijing-based lab continues to push the boundaries of what open-source (and open-weight) models can achieve compared to their proprietary Western counterparts.

What's New in GLM-5.1?

The jump from 5.0 to 5.1 isn't just a minor patch. Zhipu AI has focused on three core pillars: Reasoning Depth, Contextual Retention, and Developer Workflow Integration.

1. Enhanced Reasoning (CoT+)

GLM-5.1 introduces an improved Chain-of-Thought (CoT) mechanism that reduces "hallucination loops" in complex mathematical and logical proofs. In internal benchmarks, GLM-5.1 showed a 15% improvement in solving competitive programming problems compared to its predecessor.

2. The 200K Context Window

While 1M context windows are becoming the new headline grabber, Zhipu has focused on effective retrieval. The GLM-5.1 200K window boasts near-perfect "needle in a haystack" performance, ensuring that even at maximum capacity, the model doesn't lose track of critical facts buried in the middle of a document.
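A "needle in a haystack" test can be sketched in a few lines. The example below is a minimal illustration of how such prompts are commonly built, not Zhipu's evaluation harness: the filler text, the needle sentence, and the helper names `build_haystack` and `score_answer` are all hypothetical.

```python
def build_haystack(filler: str, needle: str, depth: float, target_chars: int) -> str:
    """Repeat `filler` to roughly `target_chars` and insert `needle` at a relative depth.

    depth=0.0 places the needle at the start, 0.5 in the middle, 1.0 at the end.
    """
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    repeats = target_chars // len(filler) + 1
    sentences = [filler] * repeats
    position = round(depth * len(sentences))
    sentences.insert(position, needle)
    return " ".join(sentences)


def score_answer(answer: str, expected: str) -> bool:
    """Count a retrieval as correct if the expected fact appears in the model's answer."""
    return expected.lower() in answer.lower()


# Hypothetical needle and filler, chosen only for illustration.
NEEDLE = "The secret launch code is 7421."
FILLER = "The quick brown fox jumps over the lazy dog."

# One prompt per insertion depth; a real test would scale target_chars toward the context limit.
prompts = {d: build_haystack(FILLER, NEEDLE, d, 2000) for d in (0.0, 0.25, 0.5, 0.75, 1.0)}
```

Each prompt would then be sent to the model with a question such as "What is the secret launch code?", and `score_answer` applied to the reply. Sweeping both depth and total length shows whether recall degrades in the middle of the window.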

3. Native Multimodal Integration

GLM-5.1 is natively multimodal from the ground up. This means it doesn't just "see" images via a separate adapter; it processes visual and textual data in the same latent space, leading to much higher accuracy in tasks like architectural diagram analysis and medical imaging interpretation.

Performance Benchmarks

The early numbers are impressive. GLM-5.1 is positioned to compete directly with GPT-4o and Claude 3.5 Sonnet across several key metrics:

- MMLU: 88.4%

- GSM8K: 94.2%

- HumanEval: 86.5%

What makes these numbers particularly notable is the efficiency. GLM-5.1 achieves these results with a significantly smaller active parameter count during inference, thanks to a refined Mixture-of-Experts (MoE) architecture.
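To see why a Mixture-of-Experts model can have a small active parameter count, consider a generic top-k router. This is a sketch of the general technique only: the expert count, the value of k, and the parameter sizes below are made-up numbers for illustration, not GLM-5.1's actual configuration.

```python
import math


def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]  # subtract peak for stability
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def total_params(shared, per_expert, n_experts):
    """All parameters that must be stored."""
    return shared + n_experts * per_expert


def active_params(shared, per_expert, k):
    """Parameters actually used for one token when only k experts fire."""
    return shared + k * per_expert


# Hypothetical sizes (in billions of parameters), for illustration only.
SHARED, PER_EXPERT, N_EXPERTS, K = 2, 1, 8, 2

routes = top_k_route([0.1, 2.0, -1.0, 1.5], k=2)
stored = total_params(SHARED, PER_EXPERT, N_EXPERTS)
used = active_params(SHARED, PER_EXPERT, K)
```

With these illustrative numbers the model stores 10B parameters but touches only 4B per token, which is the sense in which an MoE model's inference cost tracks its active rather than its total size.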

The Open-Source Impact

Zhipu AI continues its commitment to the developer community by releasing the weights for the 70B and 12B versions of GLM-5.1.

"Our goal is to democratize high-level intelligence," said a Zhipu AI spokesperson during the launch event. "By providing frontier-level capabilities in an open-weight format, we enable developers worldwide to build specialized agents without being locked into a single proprietary ecosystem."

Why It Matters

The release of GLM-5.1 highlights the rapid acceleration of AI development outside of Silicon Valley. For developers and researchers, it provides a powerful, flexible alternative for building:

- Autonomous Agents: Better reasoning leads to more reliable tool usage.

- Enterprise Search: The high-fidelity 200K context window is ideal for RAG (Retrieval-Augmented Generation) applications.

- Localized AI: Optimized for efficiency, the 12B model can run on high-end consumer hardware.
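The claim about consumer hardware can be checked with back-of-the-envelope arithmetic. The sketch below assumes that 12B is the total parameter count and that all weights must be resident in memory; the precisions listed are common quantization levels in general, not formats confirmed for GLM-5.1. It ignores the KV cache and activations, which add to the total.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# Assumption: 12e9 total parameters, all loaded at once.
estimates = {p: weight_memory_gb(12e9, p) for p in BYTES_PER_PARAM}

for precision, gb in estimates.items():
    print(f"{precision}: ~{gb:.0f} GB for weights alone")
```

Under these assumptions, half-precision weights need about 24 GB, which is at the limit of a single high-end consumer GPU, while 4-bit quantization brings the figure down to about 6 GB.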

How to Get Started

Developers can access GLM-5.1 via:

- Zhipu's BigModel API: Available globally with competitive pricing.

- Hugging Face: Weights for the 70B and 12B models are now available for download.

- Local Deployment: Support for llama.cpp and vLLM is already being rolled out by the community.
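For local deployment, one plausible setup is to serve the weights behind an OpenAI-compatible chat completions endpoint, which is the interface vLLM's server exposes. The sketch below only illustrates the request shape under that assumption: the model identifier `glm-5.1-12b` is a hypothetical placeholder, and the base URL assumes a server running locally on port 8000.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000/v1"  # assumption: local OpenAI-compatible server
MODEL_ID = "glm-5.1-12b"               # hypothetical placeholder, not a confirmed model name


def build_chat_request(prompt: str, max_tokens: int = 256) -> urllib.request.Request:
    """Build (but do not send) a chat completions request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first reply; requires a running server."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


req = build_chat_request("Summarize the GLM-5.1 release in one sentence.")
```

Calling `send(req)` would return the model's reply once a server is actually running. Check the model card on Hugging Face for the real model name before using it.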

As we head into the second quarter of 2026, the competition for AI supremacy is tighter than ever. GLM-5.1 proves that Zhipu AI is not just keeping up; it is setting the pace.

This report is based on official technical documentation from Zhipu AI and initial community benchmarking.

Read more

Qwen 3.7 Max: Alibaba's Agent-Grade Reasoning Model

Alibaba's Qwen 3.7 Max is a text-only reasoning flagship with 1M token context, scoring #5 on the Artificial Analysis Intelligence Index and #3 in coding benchmarks.

Meta Muse Spark: A New Frontier in Multimodal Reasoning

Meta's Superintelligence Labs unveils Muse Spark, a natively multimodal reasoning model with multi-agent orchestration and strong performance in health and visual reasoning.

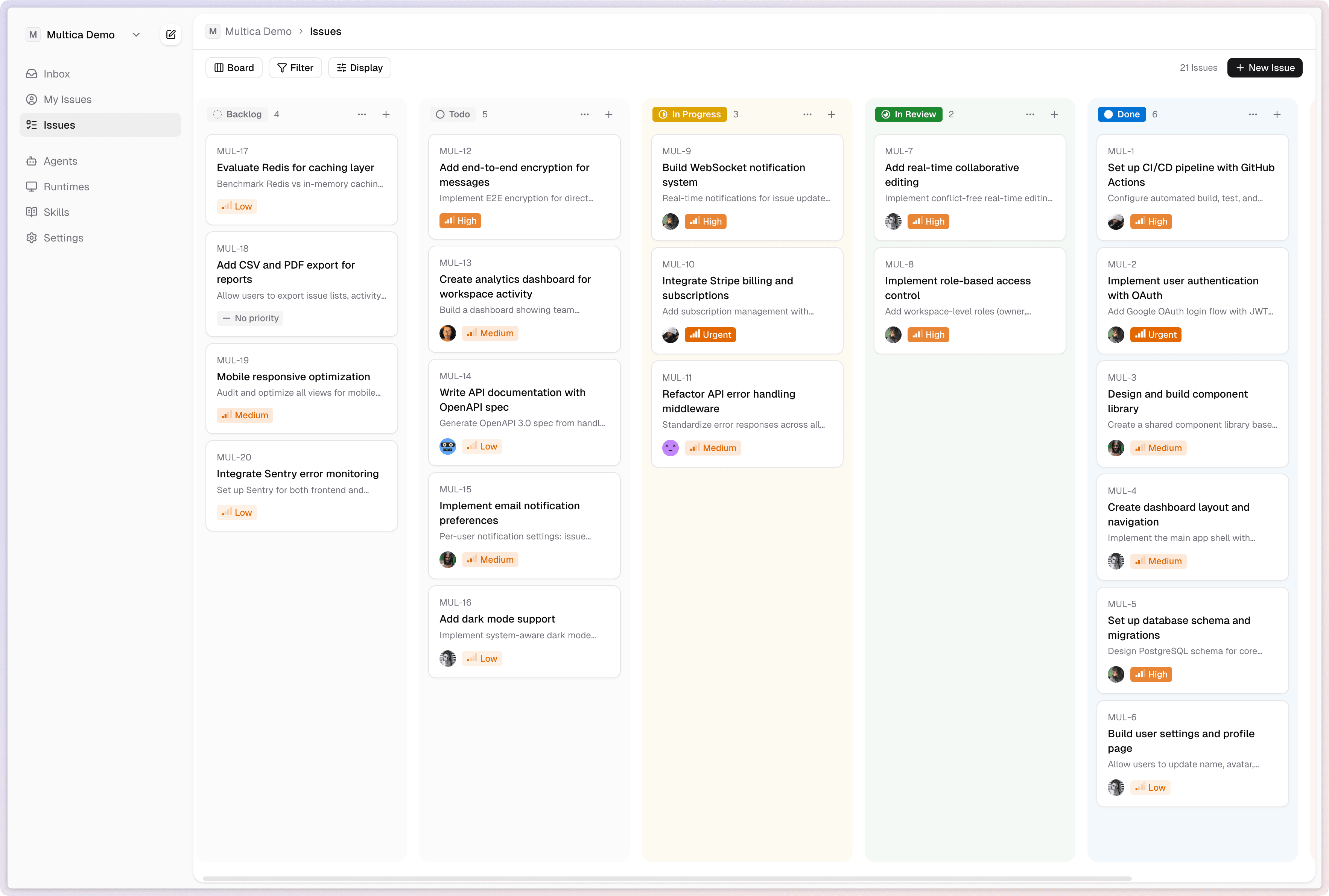

Multica: Turn AI Agents Into Real Teammates

Multica is an open-source platform that manages AI coding agents as full team members - assigning tasks, tracking progress, and compounding reusable skills across your organization.